From Logic to Language

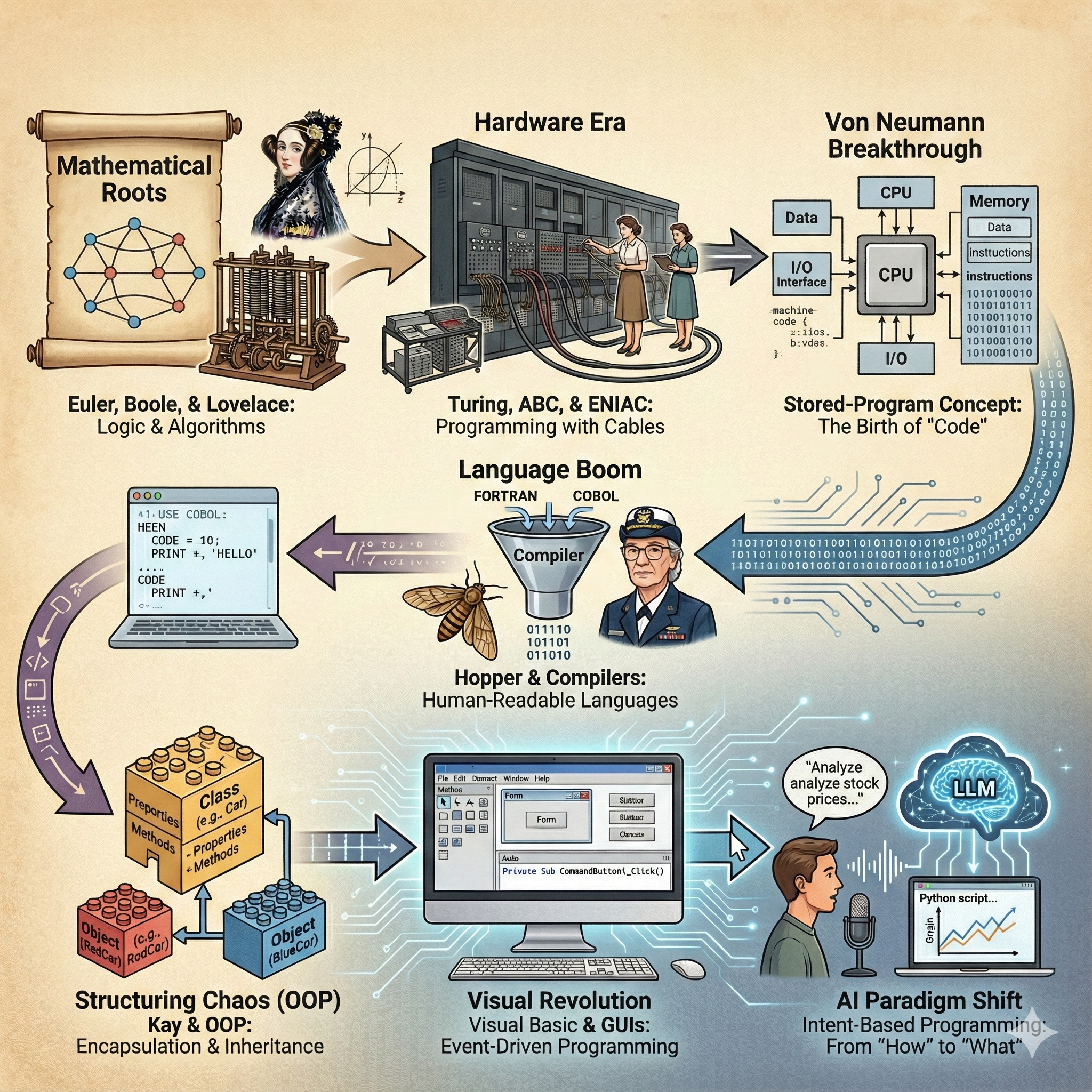

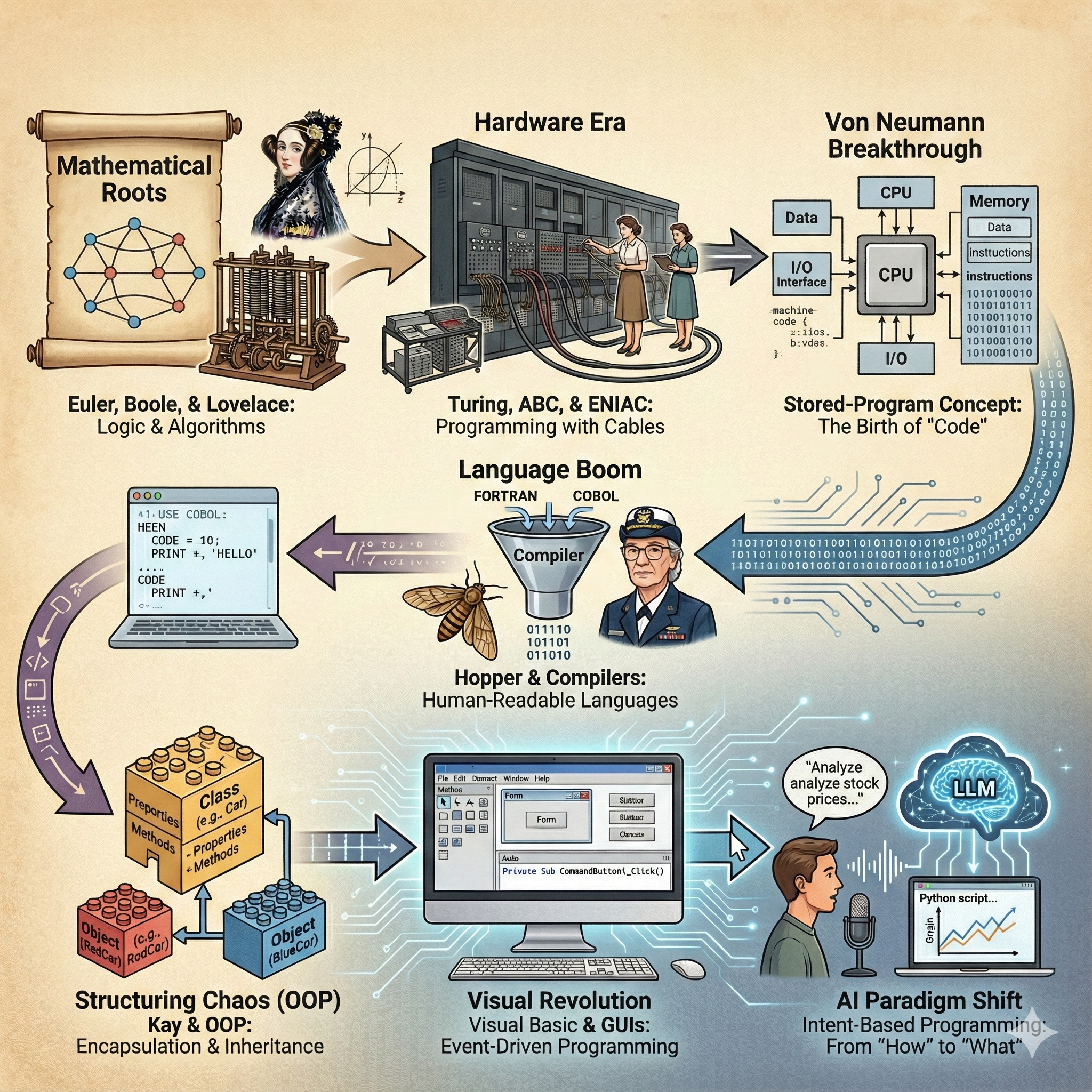

The Evolution of Programming: From Wiring to Intent

1. The Mathematical Roots

Long before silicon chips, "programming" existed as pure logic. Algorithms, the building blocks of computing, began as a branch of mathematics. In the 18th century, Leonhard Euler laid the foundations of graph theory while solving the Seven Bridges of Königsberg problem. By reducing the city to land masses and the bridges connecting them, he showed that the question of whether a walk crossing every bridge exactly once exists could be settled by reasoning alone, without physically traversing the city: an early form of algorithmic abstraction.
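Euler's reasoning can be sketched in a few lines of Python. This is a minimal illustration, not a reconstruction of his original argument: the labels A to D for the four land masses and the function names are assumptions made here, and the check assumes the graph is connected (as Königsberg was).

```python
from collections import Counter

# The seven bridges as pairs of land masses. Labels are illustrative:
# A = central island, B and C = the two river banks, D = eastern island.
BRIDGES = [("A", "B"), ("A", "B"), ("A", "C"), ("A", "C"),
           ("A", "D"), ("B", "D"), ("C", "D")]

def odd_degree_nodes(edges):
    """Return the land masses touched by an odd number of bridges."""
    degree = Counter()
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    return sorted(node for node, d in degree.items() if d % 2 == 1)

def has_euler_walk(edges):
    """For a connected graph: a walk using every edge exactly once
    exists only if zero or two nodes have odd degree."""
    return len(odd_degree_nodes(edges)) in (0, 2)

print(odd_degree_nodes(BRIDGES))  # ['A', 'B', 'C', 'D']
print(has_euler_walk(BRIDGES))    # False
```

All four land masses have an odd number of bridges, so no such walk exists, and the program reaches that answer without "walking" anywhere.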

Later, George Boole laid the basis of Boolean algebra (True/False logic), with no idea that his two-valued system would one day govern the digital world. The bridge between pure math and machinery, however, was built by Ada Lovelace. She is widely considered the first programmer for her published algorithm to calculate Bernoulli numbers on Charles Babbage's proposed Analytical Engine, a machine that was designed but never completed.

"The Analytical Engine weaves algebraic patterns just as the Jacquard loom weaves flowers and leaves."

— Ada Lovelace
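To give a feel for the kind of calculation Lovelace targeted, here is a short Python sketch that computes Bernoulli numbers exactly. It uses a standard modern recurrence, not the step-by-step procedure from her notes, and the function name `bernoulli_numbers` is an assumption made for this example.

```python
from fractions import Fraction
from math import comb

def bernoulli_numbers(n):
    """Return [B_0, ..., B_n] as exact fractions (convention B_1 = -1/2).

    Uses the recurrence: sum over k = 0..m of C(m+1, k) * B_k = 0 for m >= 1.
    """
    b = [Fraction(1)]
    for m in range(1, n + 1):
        acc = sum(comb(m + 1, k) * b[k] for k in range(m))
        b.append(-acc / (m + 1))
    return b

for index, value in enumerate(bernoulli_numbers(4)):
    print(f"B_{index} = {value}")
```

Each new number depends only on the ones before it, which is exactly the kind of rule-following that a machine can carry out.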

The Issue Solved: This era solved the problem of defining logic rigorously. It established that reasoning could be formalized into rules (algorithms) that were independent of the physical world.

The New Issue Created: While the math was sound, it was purely theoretical. There was no machine capable of executing these complex calculations at speed. The barrier was no longer logic, but engineering.

2. The Hardware Era: Programming with Cables

Serious programming began not with keyboards, but with manual labor. Alan Turing had already described a universal computing machine on paper in 1936. During WWII he helped design the electromechanical Bombe used to break the Enigma encryption. With transistors still years away, machines of this period were configured through the cumbersome wiring of plugboards and chains of electromechanical units.

"Machines take me by surprise with great frequency." — Alan Turing

Turing's theoretical work was among the first attempts to define what a computer is capable of solving at all. It laid the groundwork for computability theory and, decades later, for "Computational Complexity Theory" and the concept of NP-completeness.

The Atanasoff–Berry Computer (ABC) is often described as the first automatic electronic digital computer. Limited by the technology of the day, it was not programmable in the modern sense, but it pioneered the use of vacuum tubes for digital calculation.

The ENIAC (Electronic Numerical Integrator and Computer) realized these concepts on a massive scale. It utilized a combination of plugboard wiring and portable function tables containing 1,200 ten-way switches each.

There was no "software" as we know it. Programmers were mathematical experts—often women like Jean Bartik and Kay McNulty—who learned how to physically translate a mathematical task into a set of wired connections. To calculate a simple trajectory, they didn't type code; they plugged wires into a board, physically mapping the logic flow.

The Issue Solved: This era solved the problem of calculation speed. Calculations that took human computers many hours could now be done in seconds.

The New Issue Created: The "Programming Bottleneck." While calculation was fast, setting up the problem took days of physical rewiring. The computer was flexible, but changing its mind was arduous.

3. The Von Neumann Breakthrough

The real breakthrough is usually credited to John von Neumann. His 1945 report on the EDVAC described the "Stored-Program Concept," in which instructions and data live in the same memory. This architecture still underlies most computers today.

Before the stored-program design, hardware and software were physically intertwined. The new design separated them, allowing the hardware to remain fixed while the "software" (instructions) changed dynamically. This is the moment "Code" was truly born.

The stored-program concept set the stage for coded instructions. Instead of rewiring the machine, programmers could simply feed it a new set of instructions, for example from tape. This birthed the era of Machine Language and Control Flow Logic (loops, branches, jumps).

    B8 01 00 00 00 ; Load 1 into EAX register
    05 01 00 00 00 ; Add 1 to EAX
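The stored-program idea can be sketched as a toy machine in Python. Everything here is hypothetical and chosen for illustration: the four opcodes, the two-cell instruction format, and the `run` function do not correspond to any real instruction set. The point is only that instructions and data sit in one shared memory.

```python
# Hypothetical opcodes for a toy machine; real instruction sets differ.
HALT, LOAD, ADD, STORE = 0, 1, 2, 3

def run(memory):
    """Fetch, decode, and execute instructions stored alongside the data."""
    acc = 0  # accumulator register
    pc = 0   # program counter: address of the next instruction
    while True:
        opcode, operand = memory[pc], memory[pc + 1]  # fetch
        pc += 2
        if opcode == LOAD:
            acc = memory[operand]
        elif opcode == ADD:
            acc += memory[operand]
        elif opcode == STORE:
            memory[operand] = acc
        elif opcode == HALT:
            return acc
        else:
            raise ValueError(f"unknown opcode {opcode} at address {pc - 2}")

# Addresses 0-7 hold instructions, addresses 8-10 hold data.
program = [
    LOAD, 8,    # acc = memory[8]
    ADD, 9,     # acc = acc + memory[9]
    STORE, 10,  # memory[10] = acc
    HALT, 0,    # stop (operand unused)
    1, 1, 0,    # data: two inputs and a result slot
]

print(run(program))  # 2
print(program[10])   # 2
```

Changing what the machine does means changing the contents of the list, not the `run` loop, which plays the role of the fixed hardware.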

The Issue Solved: It eliminated the physical rewiring bottleneck. Software became fluid, copyable, and distinct from hardware.

The New Issue Created: Cognitive Load. Machine language (binary/hex) was optimized for the CPU, not the human brain. Programmers had to memorize obscure opcodes, leading to slow development and frequent errors.

4. The Humanization of Code

As demand for programming grew, programmers needed to address the machine in something closer to English and mathematical notation. This led to the first high-level languages, such as Fortran (Formula Translation) for scientists and COBOL for business.

A key figure in this era was Grace Hopper, who popularized the idea of machine-independent programming languages. She famously carried a piece of wire to explain what a nanosecond looked like, emphasizing the need for efficiency.

"The most dangerous phrase in the language is, 'We've always done it this way.'" — Grace Hopper

    PROGRAM HELLO
    PRINT *, 'HELLO WORLD'
    STOP
    END

These languages introduced the concept of a Compiler: a translator that turns human-readable text into the machine code the processor understands.
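The translation step a compiler performs can be sketched in miniature. The Python example below is a toy under stated assumptions: it borrows Python's own parser (the standard `ast` module) for the front end, handles only `+`, `-`, and `*` on numbers, and targets a made-up stack machine (`PUSH`, `ADD`, `SUB`, `MUL`) rather than real machine code.

```python
import ast
import operator

# Mapping from parsed operators to hypothetical stack-machine instructions.
BINARY_OPS = {ast.Add: "ADD", ast.Sub: "SUB", ast.Mult: "MUL"}
RUNTIME = {"ADD": operator.add, "SUB": operator.sub, "MUL": operator.mul}

def compile_expr(source):
    """Translate an arithmetic expression into stack-machine instructions."""
    def emit(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return [("PUSH", node.value)]
        if isinstance(node, ast.BinOp) and type(node.op) in BINARY_OPS:
            # Operands first, then the operation (postfix order).
            return emit(node.left) + emit(node.right) + [(BINARY_OPS[type(node.op)], None)]
        raise SyntaxError("unsupported expression")
    return emit(ast.parse(source, mode="eval").body)

def execute(code):
    """Run the instruction list on a simple stack."""
    stack = []
    for op, arg in code:
        if op == "PUSH":
            stack.append(arg)
        else:
            right = stack.pop()
            left = stack.pop()
            stack.append(RUNTIME[op](left, right))
    return stack.pop()

code = compile_expr("2 + 3 * 4")
print(code)           # PUSH 2, PUSH 3, PUSH 4, MUL, ADD
print(execute(code))  # 14
```

The human writes `2 + 3 * 4`; the machine only ever sees the flat list of low-level steps.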

The Issue Solved: Accessibility. Scientists and business managers could now write programs without understanding the electrical engineering of the computer.

The New Issue Created: Complexity Management. As it became easier to write code, programs grew massive. Without structure, they became "Spaghetti Code"—a tangled mess of GOTO statements that was impossible to debug or maintain.

5. Structuring the Chaos

As tasks became more complex (operating systems, banking networks), simple lists of instructions failed. We needed better architecture. This led to Object-Oriented Programming (OOP). Computer scientist Alan Kay, who coined the term, envisioned software as biological cells—independent units communicating with each other.

OOP introduced concepts like inheritance and encapsulation. Programmers could create "Black Boxes"—reusable objects (like a "User" or "Window") that hid their internal complexity.
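Encapsulation and inheritance can be sketched briefly in Python. The `Account` and `SavingsAccount` classes are hypothetical examples invented for this illustration; they are not taken from any particular system.

```python
class Account:
    """A 'black box': callers use deposit() and balance, not the internal field."""

    def __init__(self, owner):
        self.owner = owner
        self._balance = 0  # leading underscore marks this as internal by convention

    def deposit(self, amount):
        if amount <= 0:
            raise ValueError("deposit must be positive")
        self._balance += amount

    @property
    def balance(self):
        """Read-only view of the internal state."""
        return self._balance


class SavingsAccount(Account):
    """Inherits deposit() and balance from Account and adds interest."""

    def __init__(self, owner, rate):
        super().__init__(owner)
        self.rate = rate

    def add_interest(self):
        self._balance += self._balance * self.rate


savings = SavingsAccount("Ada", rate=0.5)
savings.deposit(100)   # behavior inherited from Account
savings.add_interest()
print(savings.balance)
```

Code that uses an account never needs to know how the balance is stored, so the internals can change without breaking callers.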

"I thought of objects being like biological cells and/or individual computers on a network, only able to communicate with messages." — Alan Kay

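    #include <iostream>
    #include <string>
    using namespace std;

    class Car {
    public:
        string brand;
        void honk() {
            cout << "Beep beep!";
        }
    };

    int main() {
        Car car;     // create an object from the class
        car.honk();  // prints: Beep beep!
    }

The Issue Solved: Scalability. Large teams could work on the same software with far less risk of breaking each other's code, helping enable massive systems like Windows or the Internet infrastructure.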

The New Issue Created: The "Expert Gap." While scalable, these languages (C++, Java) were verbose and abstract. Programming was still a niche skill reserved for those with formal computer science training.

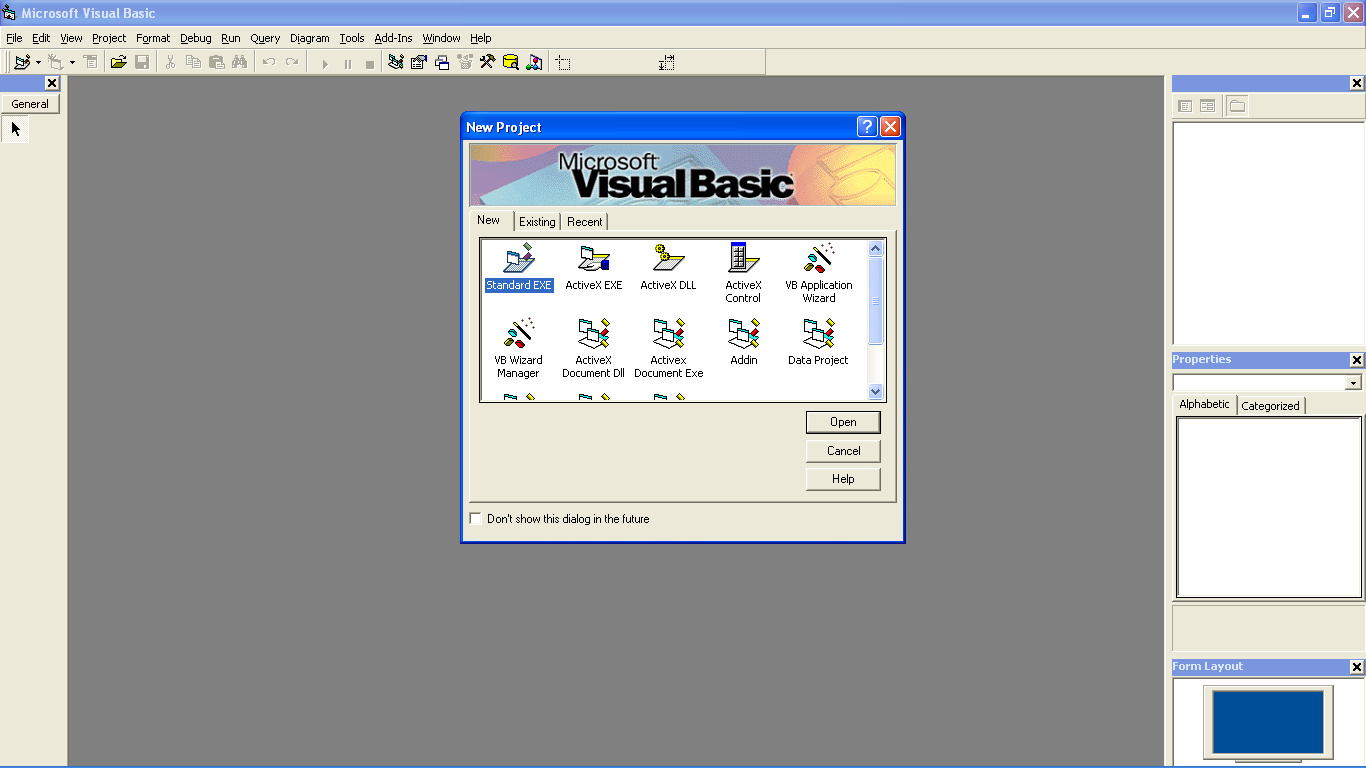

6. The Visual Revolution

The PC era brought the Graphical User Interface (GUI). Programming moved from the command line to the canvas. Visual Basic democratized programming by letting users drag and drop buttons onto a form to create apps.

This was a shift from Procedural to Event-Driven programming. The program didn't just run top-to-bottom; it waited for the user to do something (click, type, hover).
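The event-driven pattern can be sketched without any GUI toolkit. In this Python illustration the `EventBus` class and the simulated list of user actions are assumptions made for the example; a real framework would supply its own event loop and widget events.

```python
class EventBus:
    """Keeps a list of handler functions for each event name."""

    def __init__(self):
        self._handlers = {}

    def on(self, event_name, handler):
        """Register a function to call whenever event_name occurs."""
        self._handlers.setdefault(event_name, []).append(handler)

    def emit(self, event_name, payload):
        """Call every handler registered for event_name; return their results."""
        return [handler(payload) for handler in self._handlers.get(event_name, [])]


bus = EventBus()
bus.on("click", lambda target: f"{target} was clicked")

# Stand-in for real user input: the program reacts to whatever arrives.
simulated_actions = [("click", "OK"), ("hover", "Cancel"), ("click", "Cancel")]
for event_name, target in simulated_actions:
    print(event_name, bus.emit(event_name, target))
```

Nothing happens for the hover event because no handler was registered for it. The order of execution is decided by the user's actions, not by the order of lines in the file.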

    Private Sub CommandButton1_Click()
        MsgBox "Welcome to the Visual Era!"
    End Sub

The Issue Solved: Democratization. It lowered the barrier to entry significantly. "User Friendly" became the standard, and non-programmers could finally build their own tools.

The New Issue Created: The "Syntax Barrier" remained. Even with visual tools, you still had to write rigid logic. A single missing semicolon or misspelled command would crash the program. The computer still didn't understand what you wanted, only how you typed it.

7. The AI Paradigm Shift

Today, we are witnessing the biggest shift since Von Neumann. Intent-Based Programming via Large Language Models (LLMs) means we no longer tell the machine how to do something step-by-step, but what we want the outcome to be.

Machine learning models are trained on large bodies of existing code and text, so they can "learn" from past programming practice. LLMs can often infer intent, repair badly formatted requests, and generate complex logic from simple prompts. We are moving from Deterministic (if X then Y) to Probabilistic (based on context, X likely means Y).
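The contrast between the two styles can be shown with a deliberately tiny Python sketch. It is nothing like how an LLM works internally: the rule table, the intent names, and the observation counts below are all made up for illustration.

```python
from collections import Counter

# Deterministic: an exact rule table. Unknown input is simply an error.
RULES = {"plot": "draw_chart", "sum": "add_numbers"}

def deterministic(command):
    return RULES[command]  # raises KeyError for anything not listed

# Probabilistic: made-up counts of what each word meant in past requests.
OBSERVATIONS = {
    "graph": Counter({"draw_chart": 9, "add_numbers": 1}),
    "total": Counter({"add_numbers": 8, "draw_chart": 2}),
}

def probabilistic(word):
    """Return the most likely intent and its estimated probability."""
    counts = OBSERVATIONS[word]
    intent, hits = counts.most_common(1)[0]
    return intent, hits / sum(counts.values())

print(deterministic("plot"))   # draw_chart
print(probabilistic("graph"))  # ('draw_chart', 0.9)
```

The first function is always right or fails outright; the second gives a best guess together with a degree of confidence, which is why its answers need checking.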

"Analyze the attached CSV file of stock prices. Identify the moving average crossover points, and generate a Python script to plot these using Matplotlib."

The Issue Solved: The Translation Gap. For the first time, humans can speak to computers in natural language. The barrier of syntax errors and rote memorization is greatly reduced.

The New Issue Created: Trust and Verification. Because we are no longer writing every line, we must learn how to verify that the AI's output is correct. Programming is shifting from "Writing" to "Reviewing" and "Architecting."
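Reviewing generated code often comes down to checking its core logic against a case small enough to work out by hand. The Python sketch below shows what the crossover logic of such a script might look like; it is an assumption about one plausible output, not actual LLM output, and the plotting step is left out. The function names, window sizes, and sample prices are all invented for the example.

```python
def moving_average(values, window):
    """Simple moving average; None until a full window of data is available."""
    averages = []
    for i in range(len(values)):
        if i + 1 < window:
            averages.append(None)
        else:
            averages.append(sum(values[i + 1 - window:i + 1]) / window)
    return averages

def crossovers(prices, short=2, long=3):
    """Return (index, 'up' or 'down') where the short average crosses the long one."""
    fast = moving_average(prices, short)
    slow = moving_average(prices, long)
    events = []
    for i in range(1, len(prices)):
        if None in (fast[i - 1], slow[i - 1], fast[i], slow[i]):
            continue  # not enough data yet
        before = fast[i - 1] - slow[i - 1]
        after = fast[i] - slow[i]
        if before <= 0 < after:
            events.append((i, "up"))
        elif before >= 0 > after:
            events.append((i, "down"))
    return events

# A reviewer's check: a series short enough to verify with pencil and paper.
sample = [5, 4, 3, 4, 6, 7, 5, 2]
print(crossovers(sample))  # [(4, 'up'), (7, 'down')]
```

If the script's answer on the small series disagrees with the hand calculation, the reviewer has found a bug without reading every line.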
